Google recently released the latest iteration of its Panda update, called Panda 4.0, in late May 2014. It will impact around 7.5% of all English search queries. As an SEO company, we are often asked to explain what it is and the impact such Google updates have on search engine optimisation and digital marketing in general.

So we have decided to take a stab at providing an in-depth explanation here, in a way that is simple enough for even non-SEOs to understand. Or at least we hope it is.

Feel free to refer to this article when a non-SEO wants to know why the word Panda gets you all worked up. Or if you’re a marketing manager who needs to explain the intricacies of the Panda update to your boss, just send them a link.

It’s quite a long read, so we’ve added the links below to help you quickly navigate to the various sections of this article.

What is the Google Panda Update?

Google’s Panda update is a filter that sits on top of the regular Google algorithm and is designed to penalize websites with ‘low quality content’. It was first released on 24 February 2011, and has since gone through four major iterations.

A Panda by Any Other Name

For the uninitiated, Google’s naming convention for its updates can be a bit confusing. Google’s Panda update was initially referred to as the ‘Farmer’ update since it targeted content farms (a term coined by Danny Sullivan of Search Engine Land). Content farms are websites, such as those run by Demand Media, that host a large volume of typically low quality content. However, internally, it was referred to as ‘Panda’, after one of the key engineers who worked on the update. That was the name that stuck.

The Panda update that was released on 19 May 2014 is now referred to as Panda 4.0 because it was the fourth major iteration of the algorithm. You may also find references to Panda 5, or 10 or 20 (up to 25, I believe), since some websites gave each Panda update (regardless of whether it was minor or major) its own whole number. Thankfully, that practice has stopped.

List of Panda Updates

If you’re interested in the complete list of Panda updates over the years, here you go:

- Panda Update 1, 24 February 2011 (11.8%)

- Panda Update 2, 11 April 2011 (2%)

- Panda Update 6, 12 August 2011 (6-9%)

- Panda Update 8, 19 October 2011 (2%)

- Panda Update 9, 18 November 2011 (1%)

- Panda Update 12, 23 March 2012 (1.6%)

- Panda Update 15, 9 June 2012 (1%)

- Panda Update 16, 25 June 2012 (1%)

- Panda Update 17, 24 July 2012 (1%)

- Panda Update 18, 20 August 2012 (1%)

- Panda Update 19, 18 September 2012 (0.7%)

- Panda Update 20, 27 September 2012 (2.4%)

- Panda Update 21, 5 November 2012 (1.1%)

- Panda Update 22, 21 November 2012 (0.8%)

- Panda Update 23, 21 December 2012 (1.3%)

- Panda Update 24, 22 January 2013 (1.2%)

- Panda Update 25, 15 March 2013

How often is the Panda Unleashed?

The Panda algorithm used to be rolled out every few months, but it has since matured enough to be incorporated into Google’s main algorithm and is launched monthly. It takes about 10 days for Panda to roll out completely.

What does Google’s Panda Do?

Before Google let its Panda out of its zoo, content farms such as eHow and Associated Content dominated the SERPs with their low quality, mass produced articles. This proliferation of thin content was most likely due to Google rolling out its Caffeine update in an attempt to make its search results ‘fresher’. Panda was released to rectify this situation.

As we’ve mentioned previously, Google’s Panda penalises websites with low quality content. But what exactly does Google mean by ‘low quality content’? Here are the most likely triggers:

- Duplicate or Near Duplicate Content – Content that is exactly or almost the same as content on another page on the web. This is a common problem among e-commerce sites as they typically just copy and paste their principal’s or manufacturer’s product descriptions.

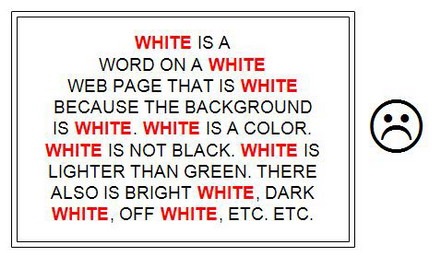

- Keyword Stuffing – Overly frequent appearance of targeted keywords on a particular page. This used to work back in the good old days, but keyword density is not a major factor now.

- Content That’s Not Useful – The content doesn’t fully satisfy the searcher’s informational needs and only briefly touches on the subject. This is usually content that is written specifically for search engines, is badly written and poorly thought out, and has a low word count.

- Bad Engagement Metrics – It’s quite likely that Google is using user engagement metrics such as bounce rate and time on site to determine the quality of a particular page. Your website might appear to be of low quality if your bounce rate is too high.
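To make the first trigger above more concrete, here is a minimal sketch of one common way to measure how similar two pieces of text are: break each into overlapping three-word 'shingles' and compare the sets. This is not Google's method (which isn't public); the function names and sample product descriptions are made up for illustration.

```python
import re


def shingles(text, size=3):
    """Return the set of overlapping word n-grams (shingles) in text."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard_similarity(text_a, text_b, size=3):
    """Share of shingles the two texts have in common (0.0 to 1.0)."""
    a, b = shingles(text_a, size), shingles(text_b, size)
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


# Hypothetical product descriptions, for illustration only.
manufacturer = "This 40 inch LED TV delivers crisp full HD pictures and rich sound."
copied = "This 40 inch LED TV delivers crisp full HD pictures and rich sound!"
rewritten = "We tested this television for a month; here is how the picture holds up."

print(jaccard_similarity(manufacturer, copied))     # copy-and-paste description
print(jaccard_similarity(manufacturer, rewritten))  # description written from scratch
```

A score close to 1.0 suggests the page is a copy or near copy, while a score close to 0.0 suggests original wording. Where to draw the line is a judgment call.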

One of the most dangerous aspects of this particular update is that a Panda Penalty impacts a site’s rankings on the whole, and not only the individual page. The extent to which each page on a Panda-hit site is penalised varies though.

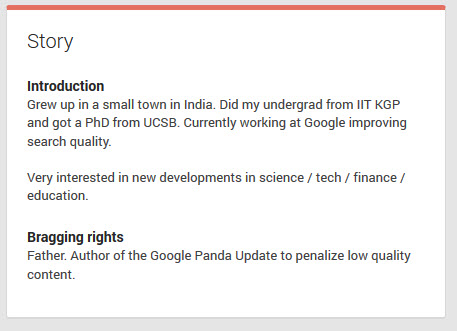

A Peek at Google’s Panda Patent

Although the first Panda update appeared in 2011, it seems that Google was only recently awarded a patent (# 8,682,892 B1) for it. You can see Navneet Panda’s name on the patent, although there is still some debate on whether this is the Panda algorithm or not. Also, just because Google has a patent for something doesn’t mean it’s in use.

This section is more for geeks and SEO specialists, so feel free to skip it if you find it a tad dry.

How It Counts Links

The system determines a count of independent links for the group (step 302). A link for a group of resources is an incoming link to a resource in the group, i.e., a link having a resource in the group as its target. Links for the group can include express links, implied links, or both. […] An implied link is a reference to a target resource, e.g., a citation to the target resource, which is included in a source resource but is not an express link to the target resource. Thus, a resource in the group can be the target of an implied link without a user being able to navigate to the resource by following the implied link.

While the algorithm will determine the number of links to a particular page, it won’t give each of them the same value. It will look at the various links to determine if they were ‘independently made’ or not. Factors taken into consideration include web hosts, domain owners, style sheets, photos and others to determine if those links were made by the same website or website owner/s. If so, Google will not count those links. This is a not-so-veiled reference to link networks.

Furthermore, the algorithm will not count all the links as the same. Some links will flow more ‘link juice’, while others less. For example, getting a sidebar or footer link that is automatically populated across 100 pages of NYT.com is different from getting mentioned in 100 NYT articles.
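As a rough illustration of the ‘independent links’ idea, the sketch below counts a link only if neither its web host nor its domain owner has already been seen. It is a deliberate simplification of our reading of the patent, not Google's implementation; the `Link` fields and sample data are hypothetical, and the real system reportedly looks at many more signals (style sheets, photos and so on).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Link:
    source_url: str
    host_ip: str       # hypothetical stand-in for "same web host"
    domain_owner: str  # hypothetical stand-in for "same domain owner"


def count_independent_links(links):
    """Count a link only if its host and its owner are both new."""
    seen_hosts, seen_owners = set(), set()
    independent = 0
    for link in links:
        if link.host_ip not in seen_hosts and link.domain_owner not in seen_owners:
            independent += 1
        seen_hosts.add(link.host_ip)
        seen_owners.add(link.domain_owner)
    return independent


# Two blogs on the same host with the same owner, plus one unrelated news site.
links = [
    Link("https://blog-a.example/post", "203.0.113.1", "Alice"),
    Link("https://blog-b.example/post", "203.0.113.1", "Alice"),
    Link("https://news.example/story", "198.51.100.7", "Bob"),
]
print(count_independent_links(links))
```

Three links go in, but only two count, because the two blogs look like they belong to the same person. That is the logic that makes link networks so much less effective.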

Brand Searches are Important

The algorithm will also search for and count brand searches. These refer to any searches that include your brand name. For example, ‘cloudrock SEO’ or ‘cloudrock digital marketing’ are branded searches.

What it means is that once a user searches for a brand name and clicks on that website, it creates a connection in Google’s eyes. Over time, Google will understand that the query is a brand name, and will use that within the context of all the other unlinked brand mentions on the web.
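If you want a feel for how much of your own search traffic is branded, a minimal sketch like the one below will do. It assumes you have exported a list of search queries (for example, from your analytics or webmaster tools) and simply checks each one for your brand terms; the function name and sample queries are ours.

```python
def branded_share(queries, brand_terms):
    """Return the fraction of queries that mention any of the brand terms."""
    if not queries:
        return 0.0
    terms = [term.lower() for term in brand_terms]
    branded = [q for q in queries if any(term in q.lower() for term in terms)]
    return len(branded) / len(queries)


# Hypothetical query export, for illustration only.
queries = [
    "cloudrock SEO",
    "cloudrock digital marketing",
    "seo company malaysia",
    "what is google panda",
]
print(branded_share(queries, ["cloudrock"]))  # 0.5
```

A simple substring match like this will miss misspellings of your brand name, so treat the number as a floor rather than an exact figure.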

Role of Navigational Searches

Before we go into this section, let me give you a quick primer on the various kinds of user searches, categorised by user intent.

There are essentially three types of searches – commercial, informational and navigational. These can also be referred to as the ‘do-know-go’ method of classifying searches (see Search Quality Evaluator Guide below for link to the guide).

- Commercial (Do) – Keywords that have commercial intent such as ‘buy Samsung TV’ or ‘interior design firm Malaysia’. These keywords are towards the end of the buyer funnel. SEO and PPC specialists pay a lot of attention to these types of keywords.

- Informational (Know) – Keywords where the searcher is looking for information rather than to make a purchase such as ‘What is the meta keyword tag?’ or ‘Difference between LCD and LED TVs’.

- Navigational (Go) – The searcher already knows which website they want to visit. For example, someone searching for ‘Facebook’ is usually looking for Facebook’s official website. SEO experts typically ignore these types of searches because, well, what’s the point?

If a search query is navigational, then a website will never get penalized for that particular query. For example, Twitter will not get penalised for the keyword ‘Twitter’. Of course, if a website were under a manual penalty, it might not even rank for its brand name, but that’s a different story.

Navigational searches are users typing in a brand name in Google’s search engine to go to the brand’s official website. SEOs tended to ignore those.

It wasn’t long ago that SEOs were measured on the increase of non-branded, organic traffic. In fact, some SEOs still advocate this.

However, this shows that branded search queries are becoming increasingly important, especially as a quality signal. This implies that a company needs to be executing marketing activities other than SEO to increase the awareness of its brand and encourage brand loyalty.

The SEO (whether in-house or agency) doesn’t typically have any influence on this. But seeing that it might affect the ranking of his / her website, it seems that’s something that needs to change.

At the very least, the SEO needs to be in the loop with regard to the various other marketing activities the company is doing, and find ways to leverage that for SEO. This might sound obvious, but considering how siloed marketing departments usually are (and that SEO is typically outsourced here), this doesn’t usually happen.

Writing’s on the Wall for EMD Strategy

Another major implication of this patent is related to the still popular strategy of using exact match domains (EMDs) for websites. For example, if you’re trying to rank for ‘drug test kits Malaysia’ then you might decide to build your website on ‘drugtest.com.my’.

EMDs used to have a very distinct advantage when it came to ranking in the SERPs. Arguably, Google’s EMD update took care of that, but reports have shown that that particular update only had limited effect. But with the increasing importance of branded searches, these EMDs will find themselves at a disadvantage in the future. How is a generic domain going to attract any navigational or branded searches? When it comes to choosing domains, it seems SEO considerations should not play a huge part anymore.

How does Google do This?

While Panda is an algorithmic penalty, the issue of what makes a particular page high quality and highly relevant to a search result is not easy to define mathematically. In its ongoing efforts to refine its algorithm, Google hires an army of search quality raters to manually examine websites and rate them according to their quality and relevancy.

Human Search Quality Raters

Google employs Search Quality Raters to manually check websites according to certain guidelines. Yeah, these are actual human beings (possibly numbering in the tens of thousands) whom Google hires to help it rate how well it is doing in matching users’ queries with the best source of information. It’s not easy to become one; these Search Quality Raters have to take what appear to be two very hard tests before they are hired.

On average, Google requires six testers to rate a site (the rating choices are Vital, Useful, Relevant and Off-Topic or Useless) to reduce the chance of error or bias. If two or more testers differ on the rating to be given to a particular site, they must come to an agreement (via comments or debate) before submitting it.

If you’re interested in learning more about these search quality raters, you can read this insightful article, ‘An Interview With A Google Search Quality Rater’. The article also provides an interesting look at how controlled the work environment is.

Based on the interview article, this appears to be how a search quality rater will evaluate a page:

- Check for spam signals such as hidden text, keyword stuffing etc

- Determine if the page satisfies the information needs of the searcher

- Then examine the design and layout. It’s not about whether the design is gorgeous, but if a user is able to find what they need on the page without getting lost

Of course, this is hardly definitive (a sample size of one is not statistically significant), so take it with a pinch of salt. In fact, take everything an SEO tells you with a pinch of salt.

Search Quality Evaluator Guide

We previously mentioned that these raters check each page against the criteria set forth within a guide. You can find the Search Quality Evaluator Guide here.

Google still has not completely solved the problem of low quality content appearing in its search engine results pages (SERPs). You can see how hard they’re trying though.

General Impact of Google’s Panda Updates

Google’s Panda update is one of the two major updates that completely changed the SEO landscape (the other being the Penguin update). In fact, when historians talk about SEO in the future, they can probably draw a clear distinction between SEO done before 2012 and after 2012.

It also probably fueled the current push towards content marketing, as scores of SEOs jumped onto the ‘quality content’ bandwagon. More about that later.

However, a general criticism of the Panda update is that there was too much collateral damage, and the ones most affected were the small business owners. Google responded by promising a ‘softer’ Panda.

Google Launches a ‘Softer & Gentler’ Panda 4.0

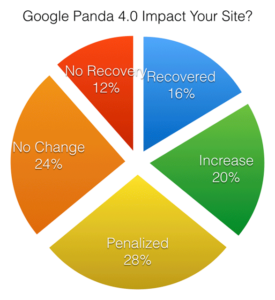

Google’s latest Panda update occurred on 20 May 2014 and is referred to as Panda 4.0. Matt Cutts claims that it is much softer and gentler, and early results seem to support that. An initial poll suggests that some websites have recovered from previous Panda penalties.

It still does pretty much the same thing – targeting websites with low quality content. There have been a few interesting developments with this latest update though.

Websites with Topical Authority Rewarded

Earlier in the year, Matt Cutts released a Webmaster Central video that hints that Google will be taking a new approach to measuring online authority.

In the video, he makes the distinction between popularity and authority – just because a site is popular (has a lot of visitors or social signals) does not necessarily mean that it is authoritative. For example, most people wouldn’t share governmental websites on their social networks, but they would link and reference them on their websites.

We also know that Google is moving towards rewarding credible authors with better ranking, and is in fact already using a form of author rank in its in-depth articles.

Panda 4.0 seems to be a step in that direction.

Currently, my observation is that sites that have their Google Authorship photo snippet displayed in the SERPs (2016 Update: this has since been discontinued) are judged to be more authoritative in certain subject matters. Note that I said ‘sites’, and not authors – Google still seems to be some way off from identifying authority for individual authors.

It seems like websites that are focused on a particular niche and provide a lot of content for that niche are ranking better than general websites that also cover a lot of other topics.

CognitiveSEO makes a good argument for this case.

They looked at the keyword ‘brown recluse’ and compared two websites – eMedicine (left) and LiveScience (right).

The eMedicine page was a big winner for Panda 4.0, gaining 99 positions for this keyword. While both websites had high user activity, eMedicine’s website covers the topic in much more depth. It has articles on how to treat a brown recluse’s bite, symptoms, diagnoses and so on.

LiveScience does not cover the topic in as much depth. At the same time, its website also has articles on a wide variety of topics from 3D printing to fitness trackers.

This seems to suggest that Panda 4.0 favours websites that are more specialized.

Press Release Websites Penalized?

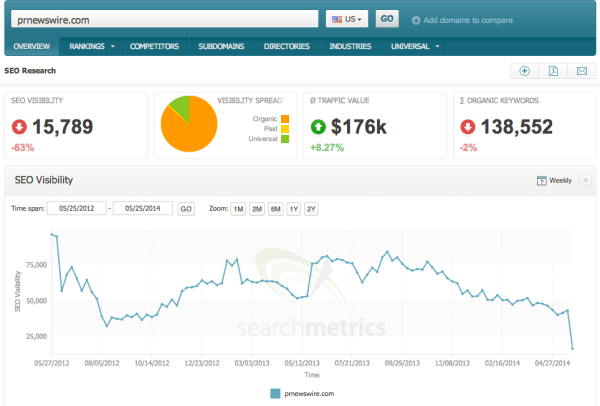

This is worth mentioning since many SEO companies in Malaysia are still advocating press releases as a major link building method. While this data isn’t conclusive, it seems that press release websites such as PRNewswire were heavily penalized during Panda 4.0.

Credit: Did Google Panda 4.0 Go After Press Release Sites?

High Profile Losers: eBay Loses 75% of Page #1 Rankings

One of the biggest losers was surprisingly eBay. It’s surprising because Google has a well-known bias towards big brands.

Credit: Panda 4.0, Payday Loan 2.0 & eBay’s Very Bad Day

The MozCast 10K shows that eBay’s ranking in its Big 10 list (the 10 domains that have the most share of page one rankings) dropped from #6 to #26. eBay used to have a 1% share of the approximately 100,000 search verticals MozCast 10K tracks, but it has now dropped to 0.28%. This is a huge drop: relative to where it started, eBay lost about 72% of its page one rankings, which is more than two-thirds.

eBay has gone from right at 1% of the URLs in Moz’s data set down to 0.28%, dropping more than two-thirds of the ranking real-estate they previously held.
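If you want to check the arithmetic behind that ‘more than two-thirds’ figure, here is a quick sketch using the two share numbers quoted above. The function name is ours.

```python
def relative_drop(before, after):
    """Fraction of the original share that was lost."""
    return (before - after) / before


# eBay's share of page one URLs in the MozCast data: 1% before, 0.28% after.
drop = relative_drop(1.0, 0.28)
print(f"{drop:.0%}")  # 72%
```

Going from 1% to 0.28% sounds like a drop of less than one percentage point, but measured against eBay's starting share it is a loss of roughly 72%.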

However, on closer examination, you will find that eBay was using some shady tactics such as doorway pages, overly aggressive internal linking with rich keywords, and also thin content on those previously ranked pages.

If you’re into conspiracy theories, note that eBay recently released a report claiming that Google Adwords is ineffective for enterprise-level companies.

Panda 4.0 Update: Implications for Digital Marketers

Now that you have a good idea of what Google’s Panda 4.0 update is all about, let’s dive a bit deeper and see what it means for you, the aspiring digital marketer. In a nutshell, it’s almost impossible to do any SEO without keeping the user experience in mind.

Search Engine Friendly Website & Tight Site Architecture

As we’ve mentioned throughout this article, Google’s Panda is concerned with the various elements on your website itself (not to be confused with the Penguin update, which looks at your link profile). This refers to technical on-page factors as well as your site architecture.

- Ensure Your Website Isn’t Compromised – Check your website to ensure that it hasn’t been hacked or compromised in any way. Some hackers will leave links to ‘bad neighbourhoods’ in the code which aren’t very obvious. You want those cleaned up.

- Make Every Page Useful – Each page on your website should provide value to the user. You don’t want to have any redundant pages which could lead to a duplicate content penalty. Redirect or 404 any pages that you think have thin content.

- Fix Broken Links & Crawl Errors – Check your Google Webmaster Tools account or use a tool such as Screaming Frog to find and fix the errors on your website. This will help search robots better crawl your website. Also ensure that files such as your XML sitemap don’t reference those broken links.

- Improve User Engagement – Check your Google Analytics to find out which pages receive the highest bounce rates. Fix them, by either dropping those pages entirely (404) or by improving the content to make it more appealing. Conversely, look at those pages that receive the most shares or highest time on page to help you determine what your readers like.

- Examine Google’s Webmaster Guidelines – Google has released a guideline that sheds light on the question of what a good quality website should be. You can find that here. This Webmaster Central post is also quite enlightening.
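One of the tips above, making sure your XML sitemap doesn’t reference broken links, is easy to check with a short script. The sketch below assumes you already have a list of broken URLs (say, exported from a crawl report) and a sitemap in the standard sitemaps.org format; the function name and sample URLs are made up.

```python
import xml.etree.ElementTree as ET

# Namespace used by standard XML sitemaps.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def broken_urls_in_sitemap(sitemap_xml, broken_urls):
    """Return the sitemap URLs that also appear in the list of broken URLs."""
    root = ET.fromstring(sitemap_xml)
    listed = [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]
    broken = set(broken_urls)
    return [url for url in listed if url in broken]


# Hypothetical sitemap and crawl results, for illustration only.
sitemap = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/</loc></url>
  <url><loc>https://www.example.com/old-page</loc></url>
  <url><loc>https://www.example.com/services</loc></url>
</urlset>"""
broken = ["https://www.example.com/old-page", "https://www.example.com/gone"]

print(broken_urls_in_sitemap(sitemap, broken))
```

Any URL this prints should either be fixed or removed from the sitemap.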

The tips above aren’t exhaustive and only serve to provide a high level view on which areas you might want to concentrate on. When you start to dive deeper into the actual implementation, you’ll find that there are a thousand little things that you could improve on. This gets even more complex when you consider factors such as international SEO or e-commerce websites.

You Need Content to Rank

You need content in order to rank. There is no question about it. That is unless you’re planning to engage in black hat SEO (which in all fairness, can still work today – for a while anyway) and can stomach the risk of one day being stomped on by any one of Google’s furry friends.

I’m beginning to be wary of the phrase ‘quality content’. When SEOs say that they’re going to provide unique and quality content, it’s usually anything but. A quality article is much more than 600 words strung together in a grammatically correct way (with keywords peppered all over it). ‘Unique’, another word that is bandied around, means that the article will not be penalized for being duplicate content (usually through a Copyscape check). If you’re lucky, this means that it has been manually rephrased; if you’re not, then the article has been spun.

Consider that Google’s core business – a multi-billion dollar core business – is about returning the most relevant and authoritative search results for a particular query. If you have aspirations to rank on the first page of Google for competitive terms, then the question you should ask yourself is – Does my content satisfy the user’s needs?

I would define quality content as:

- Useful – Able to satisfy the user’s information needs. If you work towards this, you will find that 600 words barely make the cut.

- Builds Trust – That can, over time, help you build a relationship with the reader. This includes having your own ‘voice’.

- SEO in Mind – Having SEO considerations firmly in mind at the start.

This is no easy feat. ‘Create quality content’ isn’t simply an item on a checklist.

One of our clients had a great response when we brought up the concern that they might be underestimating how difficult it is to create quality content. According to one of their directors, ‘It’s not that we’re underestimating it. We know how insanely difficult it can be. That’s why we want to look at some other ways to increase our rankings first before we start on the content.’ (I’m paraphrasing, of course).

From our experience so far in both the Singaporean and Malaysian markets, this usually involves a cultural change within the organization itself, or at the very least within the marketing team. Small and medium enterprises are typically sales-focused, and the shift to content marketing is very hard to justify in terms of immediate return-on-investment (ROI).

Every company we’ve met in Malaysia wants to do white hat SEO. Which CEO would want the brand they have worked so hard for to be associated with a Google penalty? The problem is that most do not understand what’s involved when you want to rank your terms in a white hat manner.

The misinformation being churned out by some other less-informed SEO companies isn’t making it easier. For example, ‘No, we should only do on-page optimisation because link building is too dangerous‘ or ‘Just give me your keywords and I’ll rank them. You don’t need to do anything.‘ Then, there are simply those running link networks and passing off their services as ‘white hat’. But I’ll save those for another post.

So, back to the topic at hand – what’s typically involved in getting your organization to start creating content? At the very least, you need to:

- Understand Your Market – You need to have a firm understanding of your firm’s personas and the type of keywords they search for at each stage of the buying funnel, and to create content that specifically targets those keywords or keyword clusters.

- Create a Plan – You need to create a plan, taking into consideration your personas’ informational needs, the keywords they use and the stage of the buying funnel they’re in, to know what kinds of content to create that would appeal to both human and robot readers.

- Put a Process in Place – You need a process in place in order to schedule, create, edit, publish and promote that content.

Use Structured Data

With the introduction of Google’s Hummingbird update, we know that they are moving away from keyword-based searches to entity-based searches.

They want to have a deeper understanding of the intent and context of a particular search query. This entails creating entities out of seemingly disparate information on the web.

The screenshot above is an example of an entity. Google recognizes Martin Luther King as a person, and when you search for his birthday, you can find it directly in the SERPs themselves.

One of the ways they’re doing this is through the use of structured data such as Schema.org. In a nutshell, structured data is about placing certain HTML tags on your website to tell Google what that particular information is referring to.

For illustration, see how you can implement structured data for YouTube videos here.
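To give you a rough idea of what structured data looks like, here is a minimal sketch that generates a Schema.org `VideoObject` description in JSON-LD, one of the formats that can carry Schema.org markup (the other common approach is adding microdata attributes to your existing HTML tags). The helper name and the sample video details are hypothetical, and you should check Google's current documentation for which properties it actually expects.

```python
import json


def video_json_ld(name, description, thumbnail_url, upload_date, embed_url):
    """Build a <script> tag containing Schema.org VideoObject markup."""
    data = {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "thumbnailUrl": thumbnail_url,
        "uploadDate": upload_date,
        "embedUrl": embed_url,
    }
    # Escape "</" so the JSON cannot accidentally close the script tag.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Hypothetical video details, for illustration only.
snippet = video_json_ld(
    name="What is the Google Panda update?",
    description="A short explainer on Google's Panda update.",
    thumbnail_url="https://www.example.com/thumbs/panda.jpg",
    upload_date="2014-06-01",
    embed_url="https://www.example.com/embed/panda",
)
print(snippet)
```

The printed snippet would be placed in the HTML of the page that hosts the video, so that search engines can tell that the page contains a video and what it is about.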

Marking up the content in your website makes it more visible within the SERPs themselves. This will in turn increase your click-through-rates (CTRs) and traffic.

A disclaimer here is that Google doesn’t currently use structured data as a ranking signal. Even so, it is still a worthwhile investment of your time.

Work towards Building a Brand Online

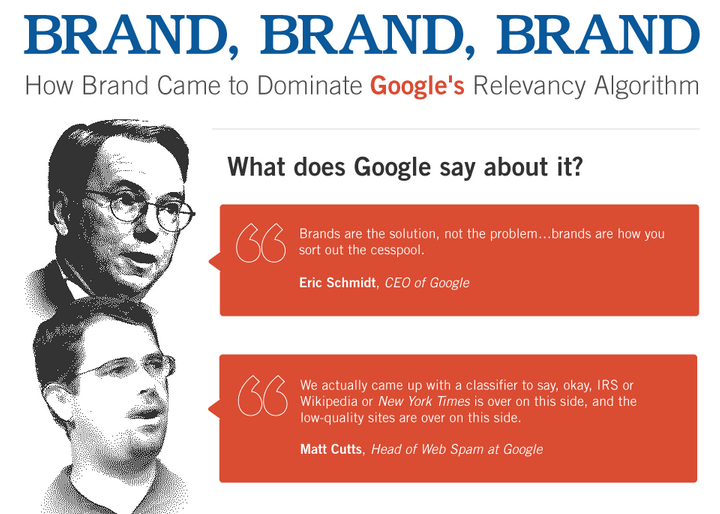

We already know that Google has a bias towards brands. According to Eric Schmidt, Google’s former CEO, ‘Brands are the solution, not the problem… brands are how you sort out the cesspool.’ And here’s some advice from Rand Fishkin of Moz.

The release of Panda’s patent adds further fuel to the argument. It mentions that Google can consider non-linking citations as ‘implied links’.

I’m not going to go into detail on how to build a strong online brand here (our editor’s already annoyed at the length of this post). So I’m just going to describe some differences between a brand and a generic website.

Brands

- Real people at a physical address

- Authentic, active social accounts

- Complete contact info

- Registered with govt websites

- Diverse traffic sources

- Branded search queries

- Offline marketing campaigns

Generic Websites

- Usually exist only online

- No significant social accounts

- Email forms only

- ‘Under the radar’

- Search typically 90% of traffic

- Few branded search queries

- No offline marketing activities

Welcome to the New Normal in SEO

Google’s Panda 4.0 update is just the latest in a long list of updates that have completely changed the SEO industry. Many are struggling to keep up, let alone thrive.

While this is a pretty long read, we feel that it barely scratches the surface of the Panda update and its implications for business owners and digital marketing managers. Over the next few months, we hope to shed more light on how businesses can do better digital marketing.

Let us know how we did.

Did you find this article useful? Did we leave too much out? Let us know in the comments below.